The Metaverse: Policing the Virtual Frontier

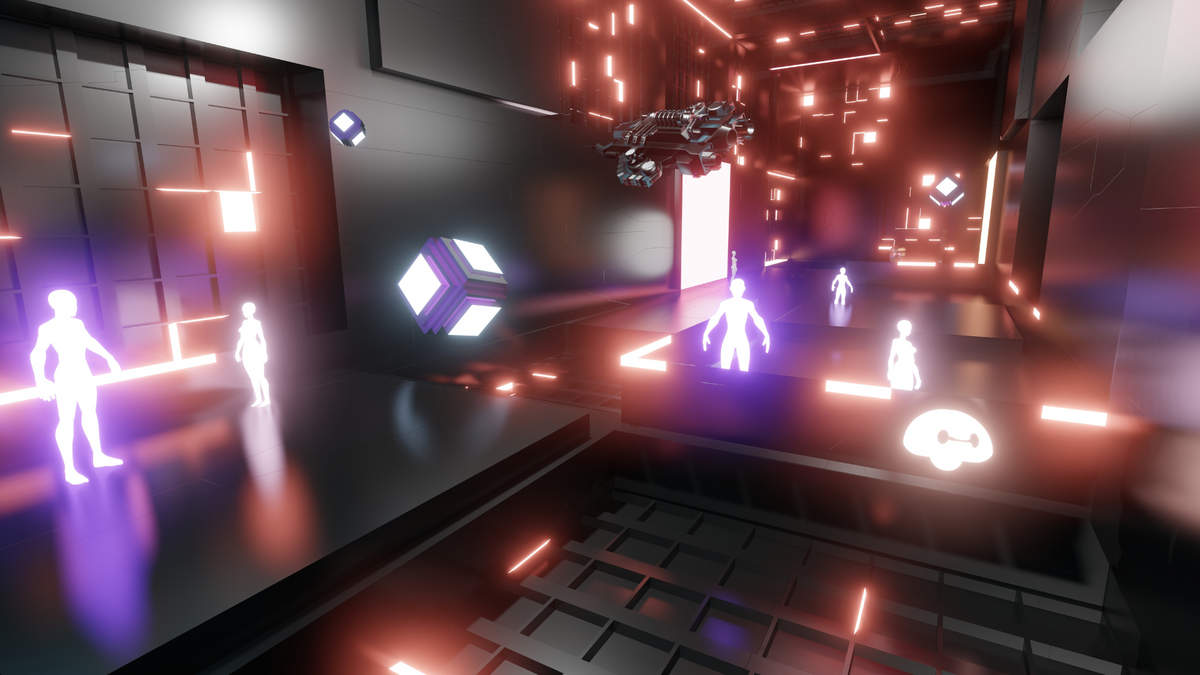

With the rise of virtual and augmented reality technologies and Web3, the concept of a "Metaverse" (a shared virtual world where users can interact with each other and with digital objects) is moving ever closer to reality, although we're not quite there yet when it comes to interoperability.

With this new frontier come new challenges, particularly with regard to policing and ensuring the safety of users within the Metaverse. I have previously written about some aspects of this here.

So, in this article, we'll explore how the Metaverse can be policed and what role platform owners can play in creating a safe and secure virtual environment.

The Metaverse and its Challenges

The Metaverse is a concept that has been popularized in science fiction for decades, but with advancements in virtual and augmented reality technologies and Web3, it's becoming a tangible reality. The Metaverse is a shared digital space where users can interact with each other and with digital objects, creating a sense of community and shared experience. However, as with any digital environment or social media platform, it also carries risks, such as cyberbullying, harassment, and even criminal activity.

Platform Owners: Key Players in Metaverse Security

Platform owners, such as the companies behind virtual and augmented reality technologies, play a critical role in ensuring the safety and security of users within the Metaverse. These companies have a responsibility to create and maintain secure virtual environments and to implement measures that prevent and address negative or harmful behavior. This can include developing user policies and guidelines and implementing technical measures to monitor and detect malicious activity, all underpinned by a clear mission and a defined set of ethics.

What Platform Owners Can Do to Help

So, what can platform owners do to help police the Metaverse and create a safe and secure virtual environment? Here are some key steps that can be taken:

- Develop and enforce user policies and guidelines: Platform owners should create clear policies and guidelines for user behavior, and enforce them consistently and fairly. These can cover appropriate conduct, content restrictions, and the consequences of violations, much like the policies already in place for today's multiplayer VR games and chat rooms.

- Implement technical measures: Platform owners can implement technical measures to monitor and detect malicious activity within the Metaverse. This can include using artificial intelligence and machine learning algorithms to detect and flag potential threats, as well as using data analytics to track and investigate suspicious activity. I also believe sentiment analysis will play a key role in this endeavour.

- Foster a culture of community: Platform owners can help to foster a positive and supportive community within the Metaverse by encouraging positive behavior and emphasizing the importance of safety and security. This can include offering resources and support for users who have experienced negative behavior, as well as promoting values such as respect, kindness, and inclusivity. Well-trained community managers will be key to supplementing any AI-based moderation.

- Work with law enforcement: Platform owners should also be willing to work with law enforcement to investigate and address criminal activity within the Metaverse. This can include sharing information and data to support investigations, and cooperating with law enforcement in any criminal proceedings. Hopefully, the known presence of law enforcement will act as a deterrent and nip much potential foul play in the bud.
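To make the sentiment-analysis idea a little more concrete, here is a minimal Python sketch, assuming a naive lexicon-based scorer as a stand-in for the trained AI/ML models mentioned above. The word weights, the `FLAG_THRESHOLD` value, and the `ChatMessage`, `sentiment_score` and `flag_for_review` names are all hypothetical, chosen purely for illustration. Rather than sanctioning anyone automatically, it only flags strongly negative messages for a human to review.

```python
import re
from dataclasses import dataclass

# Hypothetical toy lexicon (word -> sentiment weight). A real platform would
# use a trained model and a far larger, context-aware vocabulary.
LEXICON = {
    "love": 2, "great": 2, "thanks": 1, "nice": 1,
    "hate": -3, "stupid": -2, "loser": -2, "kill": -3, "ugly": -2,
}

# Hypothetical cut-off: messages scoring at or below this are flagged.
FLAG_THRESHOLD = -3


@dataclass
class ChatMessage:
    user: str
    text: str


def sentiment_score(text: str) -> int:
    """Sum the lexicon weights of every whole word in the message."""
    words = re.findall(r"[a-z']+", text.lower())
    return sum(LEXICON.get(word, 0) for word in words)


def flag_for_review(messages: list[ChatMessage]) -> list[tuple[ChatMessage, int]]:
    """Return (message, score) pairs that a human moderator should look at."""
    flagged = []
    for msg in messages:
        score = sentiment_score(msg.text)
        if score <= FLAG_THRESHOLD:
            flagged.append((msg, score))
    return flagged


if __name__ == "__main__":
    chat = [
        ChatMessage("alice", "Thanks, that was a great event!"),
        ChatMessage("bob", "You're a stupid loser, I hate you"),
        ChatMessage("carol", "Nice avatar"),
    ]
    # Only bob's message (score -7) crosses the threshold.
    for msg, score in flag_for_review(chat):
        print(f"Review needed: {msg.user} (score {score}): {msg.text}")
```

A word list like this misses sarcasm, context, and deliberate misspellings, which is why flagged messages are better routed to well-trained community managers than used to trigger automatic penalties.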

The rise of the Metaverse presents new and exciting opportunities, but also new challenges, particularly with regard to policing and ensuring the safety of users within the virtual world. Platform owners have a critical role to play in creating a safe and secure Metaverse, and by implementing the steps outlined above, they can help to ensure that it is a positive and supportive environment for all users.

The potential is huge, but so is the potential for bad actors.